Defect-Free by Design: What Michael Fagan Taught Me About AI Agents

Key Takeaways

- Inspect the Process, Not Just the Product: Michael Fagan's inspection process taught us that every defect is a symptom — the disease is upstream. This principle is the missing piece in AI orchestration.

- Agents Don't Learn Between Cycles: Without structured feedback, agents repeat the same process defects at machine speed. The agentic fragility loop isn't a bug in the agent — it's a bug in the factory.

- The CMM Level 5 Agent Organisation: By embedding mandatory review roles — Product Manager, System Architect, QA Engineer, and Process Improvement Specialist — into every feature's task plan, we create a self-improving production system.

- Two Feedback Loops: A fast loop corrects within a phase (architect adjusts the plan in real time). A slow loop corrects between phases (classified defect data drives upstream process improvements). Both are essential.

- Plan Broadly, Implement Narrowly, Replan Aggressively: The only thing you know about a plan when you put it together is that it will be wrong. But you must have a plan to know where you're wrong.

In the mid-1990s, I was a young software engineer at GEC Marconi in Sydney, working on a combat system project. The organisation was a brilliant cradle of software engineering, pursuing CMM Level 5 certification with a genuine aspiration to be recognised as world-best — and in truth, it probably was. I was young and impressionable, and fortunate to be surrounded by generous and intelligent technical leaders who invested deeply in the people around them.

They trained us as CMM Assessors. They embedded the Fagan Inspection Process into the combat system project as a core discipline. And then they brought Michael Fagan himself over from the United States to Sydney to teach us the process firsthand. We were already using his inspection process on the project, so we were keen to learn it from the man himself.

One insight from that experience has stayed with me for thirty years, and it quietly shapes everything I build today:

The inspection isn't about finding bugs in the code. It's about finding bugs in the process that produced the code.

Every defect is a symptom. The disease is always upstream. Find the disease, fix the process, and that entire class of defect stops being introduced. This is the principle that separates organisations that fight fires from organisations that prevent them.

In the era of AI agents, this principle hasn't just held up; it may be one of the most important ideas in orchestration.

The Problem: Agents Don't Learn Between Cycles

In a previous article, we described the "Agentic Loop of Fragility" — the phenomenon where AI agents, left to their own devices, enter a recursive cycle of patching. Each fix conforms to the existing (flawed) architecture, creates a side effect, and triggers another fix. The code compiles, the tests pass, but the system becomes progressively more brittle.

Through a Fagan lens, this problem comes into sharper focus. The agent adding a fifteenth if/else branch to a growing conditional isn't making a code error — it's revealing a process defect. The process — the prompt, the context, the decomposition — didn't equip the agent to recognise that the architectural pattern had reached its scaling limit.

I experienced this firsthand while building the agent interfaces in our own orchestration tool. The agent was happy to extend a branching conditional for every new interface type. Each individual branch was correct, well-tested, and consistent with the existing codebase. But the conditional grew more complex with every interface we added, when the cost of adding one should have been close to zero. The real solution was a plugin system: register a new interface, implement the contract, and the orchestrator doesn't need to know the specifics.
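To make the contrast concrete, here is a minimal sketch of the registry idea in Python. It is not the code from our orchestration tool; the names `register`, `dispatch`, and the two example interfaces are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict

# Hypothetical registry: interface name -> handler that fulfils the contract.
# The contract assumed here is simply "take a prompt string, return a string".
_REGISTRY: Dict[str, Callable[[str], str]] = {}


def register(name: str):
    """Decorator that registers a handler under an interface name."""
    def decorator(handler: Callable[[str], str]) -> Callable[[str], str]:
        if name in _REGISTRY:
            raise ValueError(f"interface already registered: {name}")
        _REGISTRY[name] = handler
        return handler
    return decorator


def dispatch(name: str, prompt: str) -> str:
    """Orchestrator entry point: one lookup, no per-interface branches."""
    try:
        handler = _REGISTRY[name]
    except KeyError:
        raise LookupError(f"no interface registered for: {name}") from None
    return handler(prompt)


# Adding an interface means adding a handler; dispatch() never changes.
@register("cli")
def cli_agent(prompt: str) -> str:
    return f"[cli] {prompt}"


@register("http")
def http_agent(prompt: str) -> str:
    return f"[http] {prompt}"
```

With this shape, the fifteenth interface costs the same as the second: one new function and one decorator line.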

The agent didn't make that call. I did. Not because the agent was incapable of building a plugin system — once I identified the need, we collaborated to design and implement it beautifully. The agent failed because nothing in its process told it to question the pattern. The defect origin wasn't the implementation. It was the architectural context provided to the agent.

Without structured feedback, this same class of process defect repeats on every cycle, at machine speed. The agents get faster at doing the wrong thing.

Fagan's Model: A Fifty-Year-Old Solution to a 2026 Problem

For those unfamiliar with the Fagan Inspection Process, it was developed by Michael Fagan at IBM in the 1970s and became one of the most rigorously validated methods in software engineering history. But its power is widely misunderstood. Most people think of it as "code review." It is not. It is a process improvement methodology that uses inspections as its data collection mechanism.

The core elements are:

- Defined roles with specific lenses. An inspection isn't a room full of people looking at the same thing the same way. Each participant has a distinct responsibility — moderator, reader, reviewer, author — and each applies a specific analytical lens. This prevents groupthink and ensures coverage.

- Classified defect origins. When a defect is found, the question isn't just "what's wrong?" It's "where did this defect originate, and what phase of the process should have prevented it?" A coding defect that originated in a vague requirement isn't a coding problem — it's a requirements problem.

- Causal analysis. Defect data is aggregated and analysed to identify patterns. Which phase is injecting the most defects? Which types of artefacts are most error-prone? Where is the process failing systematically?

- Upstream correction. The findings drive changes to the process itself — not just the product. Standards are updated. Templates are refined. Training is adjusted. The goal is to eliminate the source of defects, not just catch them downstream.

The key insight: inspection data is process telemetry, not just a quality gate.
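As a rough illustration of what "process telemetry" means in practice, the sketch below records each defect with both the phase where it was found and the phase where it originated, then counts by origin. The phase names, the `Defect` fields, and the sample records are assumptions made for this example, not Fagan's own classification scheme.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, Optional


class Phase(Enum):
    REQUIREMENTS = "requirements"
    DESIGN = "design"
    CODING = "coding"


@dataclass(frozen=True)
class Defect:
    description: str
    found_in: Phase  # where the inspection caught it
    origin: Phase    # the phase that should have prevented it


def defects_by_origin(defects: Iterable[Defect]) -> Counter:
    """Causal-analysis step: count defects by the phase that injected them."""
    return Counter(d.origin for d in defects)


def top_origin(defects: Iterable[Defect]) -> Optional[Phase]:
    """Return the phase injecting the most defects, or None if there are none."""
    ranked = defects_by_origin(defects).most_common(1)
    return ranked[0][0] if ranked else None


# Illustrative records only.
SAMPLE = [
    Defect("handler ignores timeout", Phase.CODING, Phase.REQUIREMENTS),
    Defect("retry policy undefined", Phase.DESIGN, Phase.REQUIREMENTS),
    Defect("off-by-one in pager", Phase.CODING, Phase.CODING),
]
```

In this sample, two of the three defects were found downstream but originated in requirements, so the correction belongs in the requirements phase rather than in the code.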

In a human organisation, Fagan inspections were expensive. Each one required scheduling, preparation, and the time of multiple senior engineers. You had to be selective about what you inspected. With AI agents, the economics have inverted entirely. We can run the equivalent of a Fagan inspection on every unit of work because the cost is measured in tokens, not calendar time and senior engineer hours.

The CMM Level 5 Agent Organisation

In a previous article, we described using Plan-Do-Check-Act (PDCA) cycles to manage agent execution. Most implementations of the "Check" phase are essentially: does it work? Do the tests pass? That's quality control at the output level.

What Fagan teaches us is that we need quality control at the process level. And the way to achieve that is to embed mandatory review roles into every feature decomposition, not as optional steps but as structurally enforced tasks in the work breakdown structure (WBS).

When an agent decomposes a feature into implementation tasks, it must also insert:

1. A Product Review task — a Product Manager agent that checks alignment. This is the Fagan moderator. Before any implementation work begins, this agent validates that the feature decomposition actually serves the stated intent. It catches scope defects before a single token of implementation effort is spent.

2. A System Architecture task — an Architect agent that tempers the task plan with structural concerns. This is the Fagan reader and reviewer. It examines the proposed implementation tasks, identifies architectural risks, adds tasks to address them, and adjusts dependencies to handle relationships that the initial decomposition missed. This is where the plugin-system-versus-if/else decision would be caught — the architect recognises the pattern's scaling limit and restructures the plan before implementation.

3. A Quality Assurance task — a QA Engineer agent that reviews the telemetry from the implementation phase. This is the Fagan inspector and metrics collector. It examines structured data: tokens consumed, rework cycles, self-corrections made by implementation agents, and any architectural flags raised during execution. Critically, it doesn't just note "this bead required rework" — it classifies why. Was the prompt underspecified? Was context missing from the parent? Did the architect's decomposition miss a dependency?

4. A Process Improvement task — a specialist agent that reviews the classified defect data in light of the production system itself. This is Fagan's causal analysis, automated. It identifies systemic patterns across beads: suboptimal prompt templates, insufficient context inheritance, decomposition patterns that consistently generate high rework. It then recommends upstream corrections for the next phase.

These tasks aren't optional. They are structurally enforced by BP6 — our deterministic orchestration layer — through the WBS and its dependency logic. Every feature gets the full treatment. The cost is built into the price of every feature, and that's acceptable because in a mature organisation, this overhead is what prevents the whole system from degrading over time.
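One way to picture "structurally enforced" is a decomposition function that cannot return a plan without the four review tasks. The sketch below is an assumption about how that might look, not BP6's actual API; the `Task` shape, the role names, and the exact dependency edges are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    task_id: str
    role: str
    depends_on: List[str] = field(default_factory=list)


def with_mandatory_reviews(feature_id: str, impl_ids: List[str]) -> List[Task]:
    """Wrap a feature's implementation tasks with the four review tasks.

    Assumed ordering: product review, then architecture, then implementation,
    then QA, then process improvement.
    """
    product = Task(f"{feature_id}.product-review", "product_manager")
    architecture = Task(
        f"{feature_id}.architecture", "system_architect", [product.task_id]
    )
    impl = [Task(i, "implementer", [architecture.task_id]) for i in impl_ids]
    # QA waits for every implementation task (or for architecture if none exist).
    qa = Task(
        f"{feature_id}.qa",
        "qa_engineer",
        [t.task_id for t in impl] or [architecture.task_id],
    )
    improvement = Task(
        f"{feature_id}.process-improvement", "process_improvement", [qa.task_id]
    )
    return [product, architecture, *impl, qa, improvement]
```

Because the reviews are expressed as ordinary tasks with dependencies, a deterministic scheduler enforces them the same way it enforces any other ordering constraint.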

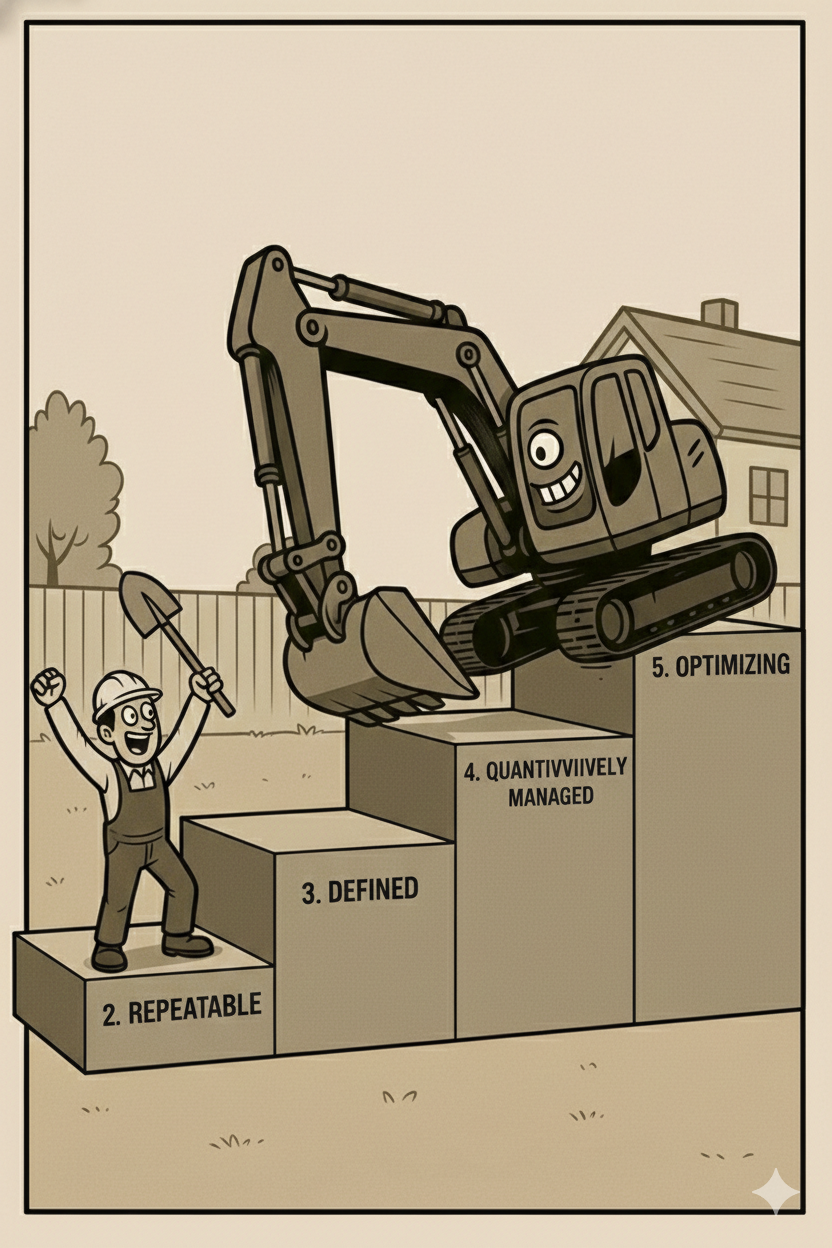

This is, in essence, an agent model of a CMM Level 5 software organisation.

Two Feedback Loops: Tactical and Strategic

The system operates through two feedback loops at different speeds.

The fast loop operates within a phase. The Architect adjusts the WBS in real time, inserting new tasks, adding dependencies, and restructuring relationships as new information emerges. Implementation agents self-correct mid-task when they encounter issues, and this self-correction rate is itself a metric: a high rate signals gaps in the upstream planning. Additive changes are handled in-flight. The plan absorbs new knowledge without stopping.

However, the fast loop has a boundary. If a change is structural — "this entire decomposition approach is wrong, we need to reorganise these beads" — that's not an in-flight adjustment. That's a replan trigger. The architect can extend the plan mid-phase but not fundamentally reshape it. This is the circuit breaker we described previously, but encoded as a structural rule rather than relying on a human to notice.
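The boundary can be encoded as a rule rather than left to judgment. The following sketch assumes a simplified plan made of task ids and dependency pairs; `ChangeKind`, `Plan`, and `ReplanRequired` are hypothetical names used only to show the shape of the circuit breaker.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Set, Tuple


class ChangeKind(Enum):
    ADD_TASK = "add_task"
    ADD_DEPENDENCY = "add_dependency"
    REORGANISE = "reorganise"  # reshapes the existing decomposition


# Only additive changes may be applied in-flight.
ADDITIVE = {ChangeKind.ADD_TASK, ChangeKind.ADD_DEPENDENCY}


class ReplanRequired(Exception):
    """Raised when a change is structural and must wait for a replan."""


@dataclass
class Plan:
    tasks: Set[str]
    dependencies: Set[Tuple[str, str]]  # (task, depends_on) pairs


def apply_change(plan: Plan, kind: ChangeKind, payload) -> None:
    """Apply an additive change in place; refuse anything structural."""
    if kind not in ADDITIVE:
        raise ReplanRequired(f"{kind.value} is structural; trigger a replan")
    if kind is ChangeKind.ADD_TASK:
        plan.tasks.add(payload)
    else:
        plan.dependencies.add(payload)
```

The architect can call `apply_change` as often as it likes during a phase, and the plan is left untouched whenever a structural change is refused.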

The slow loop operates between phases. The QA Engineer and Process Improvement agents review what happened across the completed phase. Defect origins are classified. Patterns are identified. And the findings feed forward into the next phase as upstream corrections: adjusted prompt templates, enriched context packages, improved decomposition patterns.

The Fagan connection is direct. The fast loop is the inspection finding defects. The slow loop is the causal analysis and process correction. One without the other is incomplete — inspections without causal analysis are just expensive bug-finding, and causal analysis without inspections has no data to work from.

Plan Broadly, Implement Narrowly, Replan Aggressively

These two loops are held together by a phased lifecycle that I've come to think of as: Plan broadly, implement narrowly, replan aggressively.

When I was younger, I had a very Taylorist view of engineering: if I could just specify it well enough and plan it in enough detail, the execution would follow. I learned over time, often the hard way, that the real world is simply too complex for this to hold, at least for any novel or complex endeavour. In practice, we learn and discover as we do. Assumptions prove wrong. Dependencies we didn't know about reveal themselves. The terrain looks different at ground level than it did from the map. Systems engineers used to call this "emergent properties".

But I also learned that having a broad, well-thought-out plan was still a recipe for success. The further out a plan extends, the more it becomes a strategy rather than a specification. And that's fine. The only thing you know about a plan when you put it together is that it will be wrong. But you must have a plan to know where you're wrong.

This philosophy maps directly onto the agent lifecycle:

- Plan broadly. Use progressive elaboration. Define the vision and high-level structure. Accept — embrace, even — that early assumptions will be incomplete. The plan is a framework for discovery, not a contract for delivery.

- Implement narrowly. Agents work on tightly scoped beads with explicit entry criteria, exit criteria, bounded context, and defined outputs. They don't swim in the whole codebase or the whole project. They work on bead 4.2.3.7 with exactly the context that bead requires.

- Replan aggressively. Phase boundaries are replanning opportunities. The classified defect data from the Fagan-style inspections feeds forward. The plan for phase two is informed by everything phase one discovered — including the things it got wrong. Phase one isn't a failure if it reveals wrong assumptions. It's intelligence gathering. The cost is tokens. The value is classified knowledge about where the process needs to improve.

The Data Model: Beads as Inspection Records

The infrastructure that makes all of this practical is BP6 and its underlying bead-based data model. Every bead in the WBS stores structured outputs: not just the work product, but the telemetry. Tokens consumed. Rework cycles. Self-corrections. Architectural flags raised during execution.

Bead numbers serve as the primary key in a structured database. When an agent session is initiated, the deterministic coordinator provides exactly the context that bead requires — the persona prompt, the specific inputs from upstream beads, the entry criteria, the exit criteria, and any relevant outputs from related beads.
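A minimal sketch of that context assembly follows, assuming an in-memory dictionary keyed by bead number. The `Bead` fields and `build_context` are hypothetical and stand in for whatever the real coordinator stores; for brevity the sketch only pulls outputs from declared upstream beads.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Bead:
    bead_id: str  # primary key, e.g. "4.2.3.7"
    persona_prompt: str
    entry_criteria: List[str]
    exit_criteria: List[str]
    upstream: List[str] = field(default_factory=list)  # ids of input beads
    output: str = ""


def build_context(store: Dict[str, Bead], bead_id: str) -> dict:
    """Assemble only what this bead needs: its own spec plus upstream outputs."""
    bead = store[bead_id]
    return {
        "bead_id": bead.bead_id,
        "persona_prompt": bead.persona_prompt,
        "entry_criteria": list(bead.entry_criteria),
        "exit_criteria": list(bead.exit_criteria),
        "inputs": {uid: store[uid].output for uid in bead.upstream},
    }


# Illustrative data only.
STORE = {
    "4.2.3.6": Bead("4.2.3.6", "You are an implementer.", ["spec approved"],
                    ["tests pass"], output="interface contract v1"),
    "4.2.3.7": Bead("4.2.3.7", "You are an implementer.", ["contract available"],
                    ["plugin registered"], upstream=["4.2.3.6"]),
    "4.3.1": Bead("4.3.1", "You are an implementer.", [], [],
                  output="unrelated work"),
}
```

Calling `build_context(STORE, "4.2.3.7")` yields the contract from bead 4.2.3.6 and nothing from 4.3.1, which is the bounded context the agent session starts with.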

This means the QA and Process Improvement agents aren't guessing about waste or quality. They're querying real, structured data across the entire WBS. "Beads under feature 4.2 averaged 3.1 rework cycles compared to 1.4 under feature 4.3 — what's different about how 4.2 was decomposed?" That's a question an agent can actually answer when the data is there.
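Here is a sketch of what such a query might look like, using Python's built-in `sqlite3` module. The table layout (`beads` with `bead_id` and `rework_cycles`) is an assumption for illustration, and the rows used in the usage note are made up.

```python
import sqlite3
from typing import Iterable, Optional, Tuple


def open_store(rows: Iterable[Tuple[str, int]]) -> sqlite3.Connection:
    """Create an in-memory bead table keyed by bead number."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE beads ("
        "bead_id TEXT PRIMARY KEY, rework_cycles INTEGER NOT NULL)"
    )
    conn.executemany("INSERT INTO beads VALUES (?, ?)", rows)
    return conn


def average_rework(conn: sqlite3.Connection, feature_id: str) -> Optional[float]:
    """Average rework cycles across all beads beneath a feature.

    Matching on feature_id + '.' keeps feature 4.2 from also picking up 4.20.
    Returns None when the feature has no beads.
    """
    row = conn.execute(
        "SELECT AVG(rework_cycles) FROM beads WHERE bead_id LIKE ? || '.%'",
        (feature_id,),
    ).fetchone()
    return row[0]
```

For example, with rows `("4.2.1", 3)`, `("4.2.2", 4)`, `("4.3.1", 1)`, and `("4.3.2", 2)`, comparing `average_rework(conn, "4.2")` with `average_rework(conn, "4.3")` gives the agent a concrete gap to investigate.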

This is Fagan's classified defect data — but machine-readable, accumulated automatically, and queryable at any level of the hierarchy.

The Cost/Benefit Threshold

One critical consideration remains: not every finding warrants action. A process improvement agent that proposes architectural overhauls on every cycle would paralyse the system. One that only flags what's obvious adds no value.

The answer is a tiered approach, driven by cost/benefit analysis. Lightweight corrections — adjusting a prompt template, enriching a context package — are cheap and can happen frequently. Changes to decomposition patterns or agent configurations are medium-cost and require stronger evidence. Full architectural refactors — the kind that restructure the WBS itself — only trigger when the accumulated evidence crosses a high bar.
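The tiers can be expressed as a simple gate. In the sketch below, the evidence bars (1, 5, and 20 supporting findings) are placeholder numbers chosen for illustration; the real thresholds would need to be calibrated against actual cost and benefit data.

```python
from enum import IntEnum


class Tier(IntEnum):
    LIGHTWEIGHT = 1    # prompt template or context package tweak
    MEDIUM = 2         # decomposition pattern or agent configuration change
    ARCHITECTURAL = 3  # restructuring the WBS itself


# Hypothetical evidence bars: supporting findings required before acting.
EVIDENCE_BAR = {
    Tier.LIGHTWEIGHT: 1,
    Tier.MEDIUM: 5,
    Tier.ARCHITECTURAL: 20,
}


def should_act(tier: Tier, supporting_findings: int) -> bool:
    """Act only when accumulated evidence meets the bar for that tier."""
    return supporting_findings >= EVIDENCE_BAR[tier]
```

Under these placeholder bars, three findings are enough to adjust a prompt template but not enough to change a decomposition pattern.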

The Process Improvement agent must also look beyond the code. Suboptimal prompts, insufficient context inheritance, agents consistently producing work that requires multiple revision cycles — these are factory defects, not product defects. Fixing them improves every future cycle, not just the current one. This is Deming's insight, operationalised: the system that builds the system needs its own quality loop.

The Physics Haven't Changed

Across this series of articles, we keep returning to the same theme: the fundamental "physics" of building reliable systems hasn't changed because we have AI. Reducing variance. Eliminating waste. Tracing defects to their origin. Hierarchical decomposition. Structured feedback loops.

Michael Fagan figured out the inspection process fifty years ago, rescuing IBM as a manufacturing company in the process. Deming articulated the quality philosophy before that. Systems Engineering formalised decomposition and progressive elaboration decades ago. These aren't relics of a slower era — they're proven solutions to the exact problems we're facing today, just at a different speed.

What's changed is the engine. We can now run Fagan inspections on every unit of work. We can execute PDCA cycles in seconds. We can decompose, generate, inspect, classify, correct, and replan at a pace that would have been economically absurd in a human-only organisation.

We're not reinventing the wheel. We're finally giving it the road it was built for.

References

- Michael Fagan's Inspection Process: Originally developed at IBM in the 1970s. Fagan, M.E. (1976). "Design and Code Inspections to Reduce Errors in Program Development." IBM Systems Journal, 15(3).

- Previous Articles in This Series:

  - JOMC: Why AI Agents Can't Refactor Themselves (the problem of agentic fragility loops).

  - The Physics of AI Orchestration (PDCA, progressive elaboration, and production system architecture).

  - The Foreman's Desk: Schedule Discipline in the Age of AI (BP6 and deterministic orchestration).

- W. Edwards Deming: Out of the Crisis (1986). MIT Press.

- The Ralph Loop: Geoffrey Huntley's iterative AI execution pattern. ghuntley.com/loop/

- Gas Town: Steve Yegge's vision for AI agent orchestration, published on Medium.