JOMC (Just One More Compile): Why AI Agents Can’t Refactor Themselves

Key Takeaways

- The Interpolation Trap: AI agents are world-class pattern matchers, but they do not reliably sense technical debt. They optimize for the local fix while sacrificing the global health of the system.

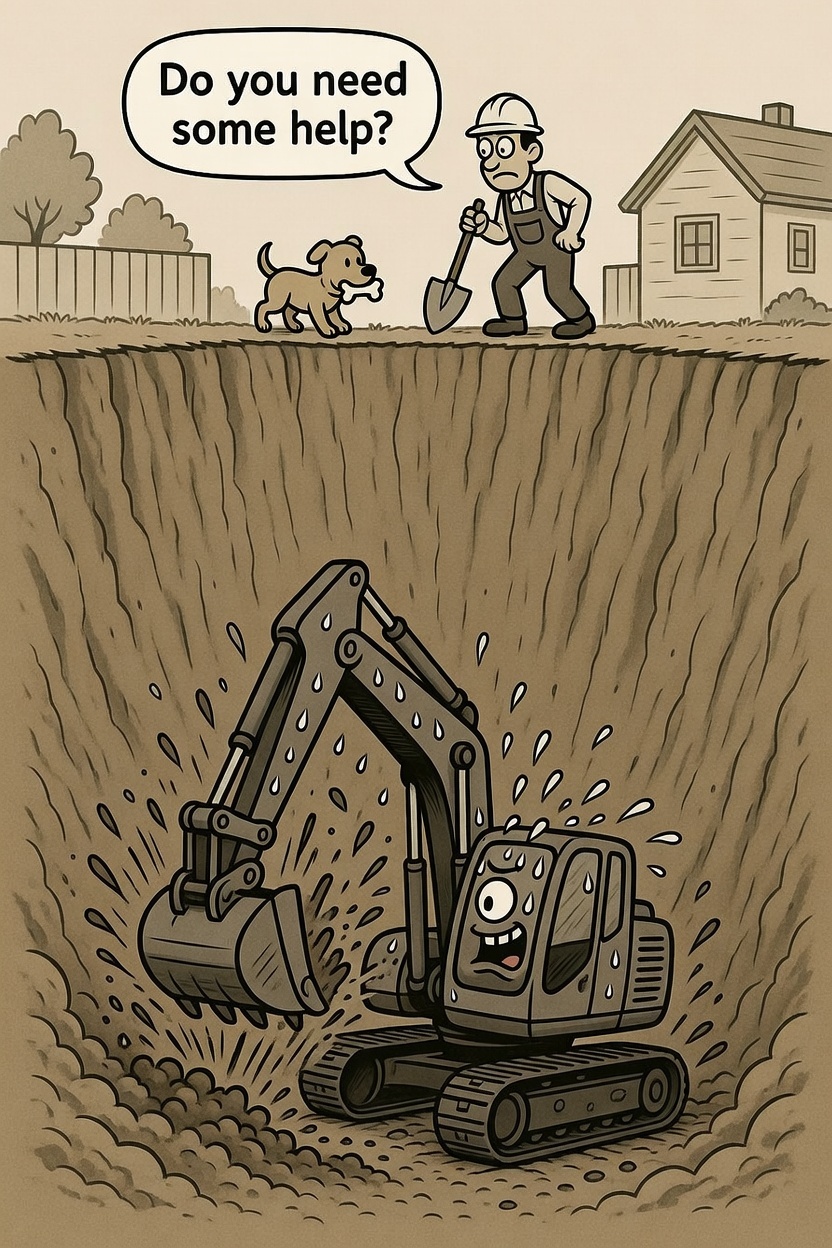

- The Agentic Loop of Fragility: Without oversight, agents can enter a recursive loop of "fragile fixing," where each patch creates a side effect that requires another patch, leading to a "Frankenstein" architecture.

- The Human Circuit Breaker: The most valuable skill for a developer is recognizing the inflection point where a strategy must be abandoned. Humans must act as the circuit breaker to stop the "fix" cycle.

- Strategic Refactoring: AI is the engine that carries out massive refactors, but the human is the navigator who knows when it's time to turn the wheel and change the architectural map.

We’ve all been there in the "pre-AI" days. You’re staring at a bug, convinced that just one more compile, one more tiny tweak to that if statement, will fix it. Eventually, a senior dev walks by, looks at your screen for five seconds, and says: "Stop. You’re patching a sinking ship. We need to rewrite this module."

In the era of AI orchestration, this "fix-fix-fix" cycle hasn't disappeared; it has just moved into hyperdrive.

The Interpolation Trap

AI agents are world-class pattern matchers. If your codebase has a specific way of handling data validation, an agent will usually replicate that pattern faithfully. This is interpolation: filling in the gaps of a known system.

The danger arises when that pattern is no longer fit for purpose. An agent doesn't necessarily smell technical debt. It won't tell you, "Hey, adding this 15th case to the switch statement is making this class unmaintainable." It will simply add the 15th case because that is what the existing pattern dictates. It optimizes for the Local Optimum (fixing the ticket) while sacrificing the Global Optimum (the health of the system).
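To make the trap concrete, here is a minimal sketch of the kind of code an agent will happily extend. The `validate` function and its field types are hypothetical, invented only for illustration; the point is the shape, not the rules.

```python
def validate(field_type: str, value: str) -> bool:
    """Hypothetical monolithic validator: every new field type is one more branch."""
    if field_type == "email":
        return "@" in value
    elif field_type == "zip":
        return value.isdigit() and len(value) == 5
    elif field_type == "phone":
        return value.replace("-", "").isdigit()
    # An agent asked to support a new field type will most likely add
    # another elif right here, because that is what the pattern dictates.
    else:
        raise ValueError(f"unknown field type: {field_type}")
```

Each added branch closes its ticket. Nothing in the function itself signals the point at which it has grown too large to maintain.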

The Agentic Loop of Fragility

Left to their own devices, agents can enter a recursive loop of "fragile fixing."

- Step 1: Agent identifies a bug.

- Step 2: Agent applies a patch that conforms to the current (flawed) architecture.

- Step 3: The patch creates a side effect because too many callers already depend on the same overloaded code.

- Step 4: Agent "fixes" the side effect with another patch.

Before you know it, you have a "Frankenstein" architecture. The code compiles, the tests pass, but the system is brittle. The agent is working harder, not smarter, because it lacks the authority to say, "This strategy is failing."
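Steps 2 through 4 can be shown with a toy example. The shared helper below is hypothetical, and the three versions stand in for three successive commits; treat it as a sketch of the dynamic rather than a transcript of any real agent session.

```python
def normalize_v1(raw: str) -> str:
    """Original shared helper: trims whitespace only."""
    return raw.strip()


def normalize_v2(raw: str) -> str:
    """Patch 1: fixes a phone-number bug by dropping dashes for every caller."""
    return raw.strip().replace("-", "")


def normalize_v3(raw: str, keep_dashes: bool = False) -> str:
    """Patch 2: adds a flag so date callers can opt out of patch 1."""
    cleaned = raw.strip()
    return cleaned if keep_dashes else cleaned.replace("-", "")


print(normalize_v2("555-0100"))    # the phone ticket is fixed
print(normalize_v2("2026-01-31"))  # side effect: the date loses its dashes
print(normalize_v3("2026-01-31", keep_dashes=True))  # patched again
```

Every version passes the test written for its own ticket. The helper still ends up with a flag that exists only to undo an earlier fix, which is how the "Frankenstein" shape accumulates.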

The Human as the Architectural Circuit Breaker

This is where systems thinking—and human intervention—becomes the "North Star."

The most valuable skill for a developer in 2026 isn't writing the code; it’s recognizing the inflection point where the current strategy must be abandoned. You must act as the Circuit Breaker to stop the agent's "fix" cycle and provide a new architectural direction.
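One way to give yourself a tripwire is to count how often an agent patches the same file within a single task. The class below is a minimal sketch under that assumption: `PatchCircuitBreaker`, its threshold of three, and the file paths are all hypothetical, and a tripped breaker is only a prompt for human judgment, not a verdict.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PatchCircuitBreaker:
    """Hypothetical tripwire: flags files an agent keeps re-patching."""

    max_patches_per_file: int = 3  # assumed threshold; tune it for your team
    patch_counts: Counter = field(default_factory=Counter)

    def record_patch(self, path: str) -> None:
        """Call once for every patch the agent applies to `path`."""
        self.patch_counts[path] += 1

    def tripped(self) -> list:
        """Return the files patched more often than the threshold allows."""
        return sorted(
            path
            for path, count in self.patch_counts.items()
            if count > self.max_patches_per_file
        )


breaker = PatchCircuitBreaker()
for path in ["billing/helpers.py"] * 4 + ["billing/api.py"]:
    breaker.record_patch(path)

if breaker.tripped():
    # This is the moment to pause the agent and review the design.
    print("Stop patching, review the design of:", breaker.tripped())
```

The counter cannot tell you what the new architecture should be. It only makes the inflection point harder to miss.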

Directing the Pivot

The beauty of this approach is that once you, the human, identify the need for a restructure, you don't have to do the heavy lifting.

- Identify the Debt: Recognize the agent is spinning its wheels.

- Define the New Strategy: "We are moving from this monolithic helper to a strategy pattern."

- Orchestrate the Change: Use the agent to carry out the massive, multi-file refactor.

The AI is the engine that carries out the move, but you are the navigator who knows when to turn the wheel.
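As an illustration of what "moving from this monolithic helper to a strategy pattern" might look like, here is a minimal sketch. The validators and the `VALIDATORS` registry are hypothetical names chosen for this example; a real refactor would follow whatever conventions your codebase already has.

```python
from typing import Callable, Dict

Validator = Callable[[str], bool]


def is_email(value: str) -> bool:
    """Toy rule: an email must contain an @ sign."""
    return "@" in value


def is_zip(value: str) -> bool:
    """Toy rule: a zip code is exactly five digits."""
    return value.isdigit() and len(value) == 5


# Each strategy is registered once; supporting a new field type means
# adding an entry here, not editing a growing chain of branches.
VALIDATORS: Dict[str, Validator] = {
    "email": is_email,
    "zip": is_zip,
}


def validate(field_type: str, value: str) -> bool:
    """Look up the strategy for `field_type` and apply it to `value`."""
    try:
        validator = VALIDATORS[field_type]
    except KeyError:
        raise ValueError(f"unknown field type: {field_type}") from None
    return validator(value)
```

Once the human has named this target shape, moving every existing branch into its own function across many files is the kind of mechanical work an agent handles well.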

Discipline Over Speed

We argue that organizing work is a solved problem. The challenge with AI isn't getting it to work; it's stopping it from working in the wrong direction.

Refactoring isn't just about clean code; it’s about strategic divergence. Don't let your agents "fix" your application into a corner. Step in, break the cycle, and give them a better map to follow.